Quick answer: AWS cloud cost optimization is the ongoing practice of reducing waste and improving spend efficiency across Amazon Web Services infrastructure, including EC2, S3, RDS, and data transfer. The core levers are rightsizing overprovisioned resources, extending commitment coverage (Reserved Instances and Savings Plans), automating non-production shutdowns, and building cost allocation that connects AWS spend to the teams and products generating it. Most organizations can reduce AWS costs 20–30% without changing their architecture.

AWS is the world’s largest cloud provider, and for most engineering organizations, it’s also the largest and least-visible line item in the IT budget. Raw billing data from AWS Cost Explorer shows what you’re spending by service. It rarely shows you who’s spending it, what product or feature it’s funding, or whether the expense is generating proportional business value.

AWS cloud cost optimization closes that gap. This guide covers the strategies, tools, and best practices that finance and engineering leaders use to reduce AWS spend without compromising performance, and how to measure whether the savings are actually improving efficiency or just cutting capability.

What Is AWS Cloud Cost Optimization?

AWS cloud cost optimization is the continuous process of matching AWS resource consumption to actual workload requirements, eliminating waste, and improving the ratio of business value delivered to dollars spent on AWS infrastructure.

It’s distinct from simple cost cutting. Reducing AWS spend by decommissioning unused resources is cost reduction. Optimizing means reducing waste while protecting, or improving, the engineering velocity and business output those resources support. The measure of success isn’t how much you cut. It’s whether your cost per customer, product, feature, cloud, or model is improving as the business scales.

AWS cloud cost management is the governance layer that makes optimization systematic: the tagging strategies, allocation models, budget alerts, and cross-functional workflows that ensure optimization efforts compound over time rather than requiring repeated one-time interventions.

Why Are AWS Costs Hard To Control?

AWS billing is structured around how Amazon charges (by service, region, resource type, and usage), not around how your business is organized. A single product feature might draw from EC2, RDS, S3, CloudFront, Lambda, and data transfer simultaneously. Attributing that spend to the team or product that generated it is nearly impossible using native AWS tools alone.

AWS Cost Explorer provides useful top-level visibility. But it shows totals and service-level breakdowns, not cost by team, product, customer, or feature. That gap between billing data and business context is where most AWS cost optimization programs stall. CloudZero’s 2024 State of Cloud Cost report found that only 30% of organizations know exactly where their cloud budget is going.

Three structural factors compound the problem. Shared infrastructure resists clean allocation — costs pool across teams and products with no natural ownership boundary. Untagged or untaggable resources accumulate in catch-all buckets that tools can’t attribute. And multi-account architectures create organizational cost pools that obscure which team or product is actually responsible.

Any serious AWS cloud cost optimization effort has to address all three, not just run rightsizing recommendations from Trusted Advisor.

What Are The Best AWS Cloud Cost Optimization Strategies?

The following strategies are organized by impact speed, from those that generate results within days to those that require more structural work but produce more durable savings.

1. Rightsize overprovisioned EC2 instances

Overprovisioning is the single largest source of AWS waste for most organizations. Teams request more compute, memory, and storage than workloads actually consume, building in headroom “just in case”, and those idle resources generate cost around the clock.

EC2 cost optimization starts with analyzing actual CPU, memory, and network utilization over a meaningful time period (at minimum 14 days; 30 days is better).

AWS Compute Optimizer and Trusted Advisor both surface rightsizing recommendations. The key data point: an EC2 instance running at 15% average CPU utilization for 30 days is a candidate for downsizing to the next smaller instance size.
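As a rough illustration of that screening rule, the sketch below flags instances whose average CPU stays at or below 15% across a 30-day window. The instance IDs, the sample data, and the function name are hypothetical, and the check looks at CPU only; a real review would also weigh memory and network before resizing anything.

```python
from statistics import mean

# Assumed thresholds, taken from the 15% / 30-day example in the text.
CPU_THRESHOLD_PERCENT = 15.0
MIN_DAYS = 30


def downsizing_candidates(daily_cpu_by_instance):
    """Return instance IDs whose average CPU over the window is at or below the threshold.

    daily_cpu_by_instance maps an instance ID to a list of daily average
    CPU percentages, one value per day.
    """
    candidates = []
    for instance_id, samples in daily_cpu_by_instance.items():
        if len(samples) < MIN_DAYS:
            continue  # not enough history to judge
        if mean(samples) <= CPU_THRESHOLD_PERCENT:
            candidates.append(instance_id)
    return sorted(candidates)


# Hypothetical utilization data.
usage = {
    "i-aaa": [12.0] * 30,  # steady low utilization
    "i-bbb": [55.0] * 30,  # busy
    "i-ccc": [5.0] * 10,   # too little history
}
print(downsizing_candidates(usage))
```

Only `i-aaa` is flagged here: `i-bbb` is too busy and `i-ccc` has fewer than 30 days of data.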

Rightsizing is not a one-time exercise. Usage patterns shift as products evolve. Organizations that embed rightsizing reviews into regular engineering sprints, instead of treating them as quarterly audits, sustain the savings over time.

See CloudZero’s guide to EC2 pricing for a breakdown of instance types and how to evaluate them against workload requirements.

2. Extend commitment coverage with Reserved Instances and Savings Plans

On-demand pricing is the most expensive way to run predictable AWS workloads. Two commitment-based discount programs offer significant savings on steady-state compute:

AWS Reserved Instances (RIs) deliver up to 72% savings on EC2 compared to on-demand rates when you commit to a specific instance type for one or three years. Standard RIs offer higher discounts; Convertible RIs offer more flexibility to exchange instance types during the term.

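AWS Savings Plans offer up to 66% savings (Compute Savings Plans) or 72% (EC2 Instance Savings Plans) in exchange for a $/hour spend commitment over one or three years. Compute Savings Plans are more flexible than RIs: they apply across instance families, sizes, and regions, and also cover Fargate and Lambda. EC2 Instance Savings Plans earn the deeper discount by committing to a single instance family in a single region.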

The discipline is matching commitment coverage to actual stable workload patterns.

Overcommitting creates its own waste — locked-in capacity that goes unused if workloads change. The goal is coverage on the predictable base load, with on-demand pricing retained for variable or experimental workloads.
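One way to reason about base-load coverage is to look at hourly spend history and commit only to the level the workload almost always reaches. The sketch below is a simplified illustration under stated assumptions: the hourly figures are hypothetical, the 10th-percentile rule is an arbitrary starting point rather than AWS guidance, and it ignores the discount rate that converts on-demand spend into an actual commitment amount.

```python
def base_load(hourly_spend, percentile=10):
    """Return the hourly spend level that the workload meets or exceeds most of the time."""
    if not hourly_spend:
        raise ValueError("need at least one hourly sample")
    ordered = sorted(hourly_spend)
    index = (len(ordered) - 1) * percentile // 100
    return ordered[index]


def unused_commitment(hourly_spend, commitment):
    """Total committed dollars that no usage consumed across the sampled hours."""
    return sum(max(commitment - h, 0) for h in hourly_spend)


# Hypothetical hourly compute spend in dollars.
hourly = [10, 12, 11, 30, 10, 25, 10, 14]
floor = base_load(hourly)
print(floor, unused_commitment(hourly, floor))  # conservative commitment, nothing unused
print(unused_commitment(hourly, 14))            # a higher commitment leaves some hours underused
```

Committing at the floor of 10 leaves no unused commitment in this sample, while committing at 14 strands 17 dollars across the same eight hours.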

For a detailed comparison of when to use each, see AWS Savings Plans vs. Reserved Instances. For ongoing commitment optimization, see CloudZero’s commitment-based discount solution.

3. Automate non-production environment shutdowns

Development, staging, and test environments don’t need to run when engineers aren’t working. Automated shutdown policies that spin down non-production EC2 instances, RDS clusters, and associated resources during off-hours and weekends eliminate idle compute spend with no impact on production systems or engineering productivity.

The math is straightforward: a development environment running 24/7 (168 hours a week) costs more than three times as much in compute as one running only during business hours (8am–6pm weekdays, or 50 hours a week). Applying automated schedules across multiple environments in multiple AWS accounts compounds the savings quickly. The Instance Scheduler on AWS solution automates this with AWS-provided tooling. Third-party tools like CloudZero offer more granular control across complex account structures.
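The arithmetic behind that comparison can be checked in a few lines. This is a minimal sketch that counts billable compute hours only; the function name is illustrative, and attached storage such as EBS volumes continues to bill while instances are stopped.

```python
HOURS_PER_WEEK = 24 * 7


def scheduled_hours_per_week(start_hour, end_hour, days_per_week):
    """Hours a resource runs each week under a fixed daily schedule."""
    return (end_hour - start_hour) * days_per_week


business_hours = scheduled_hours_per_week(8, 18, 5)    # 8am to 6pm, weekdays
ratio = HOURS_PER_WEEK / business_hours                # always-on vs. scheduled
savings_fraction = 1 - business_hours / HOURS_PER_WEEK

print(f"{business_hours} scheduled hours vs {HOURS_PER_WEEK} always-on")
print(f"always-on costs {ratio:.2f}x as much; schedule saves {savings_fraction:.0%} of compute hours")
```

A business-hours schedule runs 50 of 168 weekly hours, so always-on compute costs about 3.36 times as much, and the schedule removes roughly 70% of compute hours.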

4. Eliminate idle and orphaned resources

Every AWS environment accumulates waste over time: unattached EBS volumes from terminated instances, orphaned Elastic IP addresses, snapshots from projects that concluded months ago, load balancers with no registered targets, and RDS instances running at single-digit utilization. None of these require architectural changes to eliminate. They’re clean cuts with zero operational impact.

A systematic idle resource audit using AWS Trusted Advisor, Cost Explorer, or a third-party tool surfaces immediate savings within hours. The cadence matters as much as the initial sweep: organizations that run monthly idle resource reports prevent the accumulation that makes quarterly audits feel overwhelming.
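As one example of what such an audit looks for, the sketch below filters a list of EBS volume records for volumes that are not attached to anything. It assumes records shaped like the entries in an EC2 DescribeVolumes response (`VolumeId`, `State`, `Size`, `Attachments`); the sample data and function name are hypothetical, and fetching the records from AWS is left out.

```python
def unattached_volumes(volumes):
    """Return (volume_id, size_gib) for volumes in the 'available' state with no attachments."""
    return [
        (v["VolumeId"], v["Size"])
        for v in volumes
        if v.get("State") == "available" and not v.get("Attachments")
    ]


# Hypothetical records in the shape of DescribeVolumes entries.
sample = [
    {"VolumeId": "vol-1", "State": "in-use", "Size": 100,
     "Attachments": [{"InstanceId": "i-aaa"}]},
    {"VolumeId": "vol-2", "State": "available", "Size": 500, "Attachments": []},
]

idle = unattached_volumes(sample)
print(idle, sum(size for _, size in idle), "GiB unattached")
```

Running the same filter on a monthly schedule, rather than once, is what keeps the list short.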

5. Optimize S3 and data transfer costs

Storage and data transfer are consistently underoptimized categories in AWS cost reduction programs. S3 storage costs vary by storage class, from S3 Standard ($0.023/GB/month) down to S3 Glacier Deep Archive ($0.00099/GB/month) for data that’s rarely accessed.

Data that hasn’t been touched in 30 days and doesn’t need immediate retrieval has no business sitting in Standard storage. S3 Lifecycle policies automate this transition without manual intervention.
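To make the price gap concrete, the sketch below compares monthly storage cost for the same data in the two classes, using the per-GB prices quoted above. The 10,000 GB volume is a hypothetical figure, and the calculation covers storage only: retrieval fees, request and transition charges, and minimum storage durations for archive classes are not modeled.

```python
# Per-GB monthly prices as quoted in the text; actual prices vary by region and tier.
STANDARD_PER_GB_MONTH = 0.023
DEEP_ARCHIVE_PER_GB_MONTH = 0.00099


def monthly_storage_cost(gb, price_per_gb_month):
    """Storage-only monthly cost for a given volume of data."""
    return gb * price_per_gb_month


cold_data_gb = 10_000  # hypothetical volume of rarely accessed data
standard = monthly_storage_cost(cold_data_gb, STANDARD_PER_GB_MONTH)
deep_archive = monthly_storage_cost(cold_data_gb, DEEP_ARCHIVE_PER_GB_MONTH)

print(f"Standard:     ${standard:,.2f}/month")
print(f"Deep Archive: ${deep_archive:,.2f}/month")
print(f"Difference:   ${standard - deep_archive:,.2f}/month")
```

At these prices the same data costs about $230 a month in Standard and under $10 in Glacier Deep Archive, which is why lifecycle rules are worth setting up for data that is genuinely cold.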

Data transfer (egress) costs are a separate and frequently overlooked line item. AWS charges $0.09/GB for data transferred out to the internet from most regions.

Inter-region transfers cost about $0.02/GB on most routes. Reviewing transfer patterns and restructuring data architecture to minimize unnecessary egress (co-locating services in the same region, using CloudFront for content delivery, caching frequently accessed data) delivers measurable savings with no performance tradeoff.

See CloudZero’s S3 storage cost guide for a full breakdown of storage class pricing.

6. Build cost allocation that connects spend to the business

The strategies above reduce waste at the resource level. This one changes how the organization manages cost at the business level, and it’s what separates organizations that sustain AWS cost optimization from those that repeat the same reduction cycles every quarter.

AWS cost allocation means attributing spend to the teams, products, features, or customers generating it, not just viewing totals by service or account. When an engineering team can see their AWS cost per week, and how that changes when they launch a new feature or provision additional resources, cost becomes part of the design conversation rather than a surprise at month end.

Effective cost allocation needs a consistent tagging strategy, clear ownership policies, and tools that handle untaggable spend (shared infrastructure, Kubernetes, managed services) that tags alone can’t cover.
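A tagging strategy is easier to keep consistent when it is checked by code rather than by convention. The sketch below is a minimal example of such a check. The required key names are assumptions chosen for illustration, and the case-insensitive matching is a design choice of this sketch (AWS tag keys themselves are case sensitive).

```python
# Hypothetical required tag keys; substitute your organization's own policy.
REQUIRED_TAG_KEYS = {"team", "environment", "application", "cost-center"}


def missing_tags(resource_tags):
    """Return the required tag keys that are absent or have an empty value."""
    present = {
        key.lower()
        for key, value in resource_tags.items()
        if str(value).strip()
    }
    return sorted(REQUIRED_TAG_KEYS - present)


# Hypothetical resource tags.
print(missing_tags({"Team": "payments", "environment": "prod"}))
print(missing_tags({"team": "search", "environment": "dev",
                    "application": "indexer", "cost-center": "cc-42"}))
```

The first call reports `application` and `cost-center` as missing; the second returns an empty list. A check like this can run in a deployment pipeline so that untagged resources are caught before they are provisioned.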

See CloudZero’s guide to AWS cost management best practices for a step-by-step allocation approach.

7. Implement real-time anomaly detection

A misconfigured service or a forgotten development environment can generate thousands of dollars in AWS spend before it surfaces in a monthly report. Real-time cost anomaly detection surfaces unusual spend patterns within hours, giving teams a chance to investigate and remediate before costs compound.

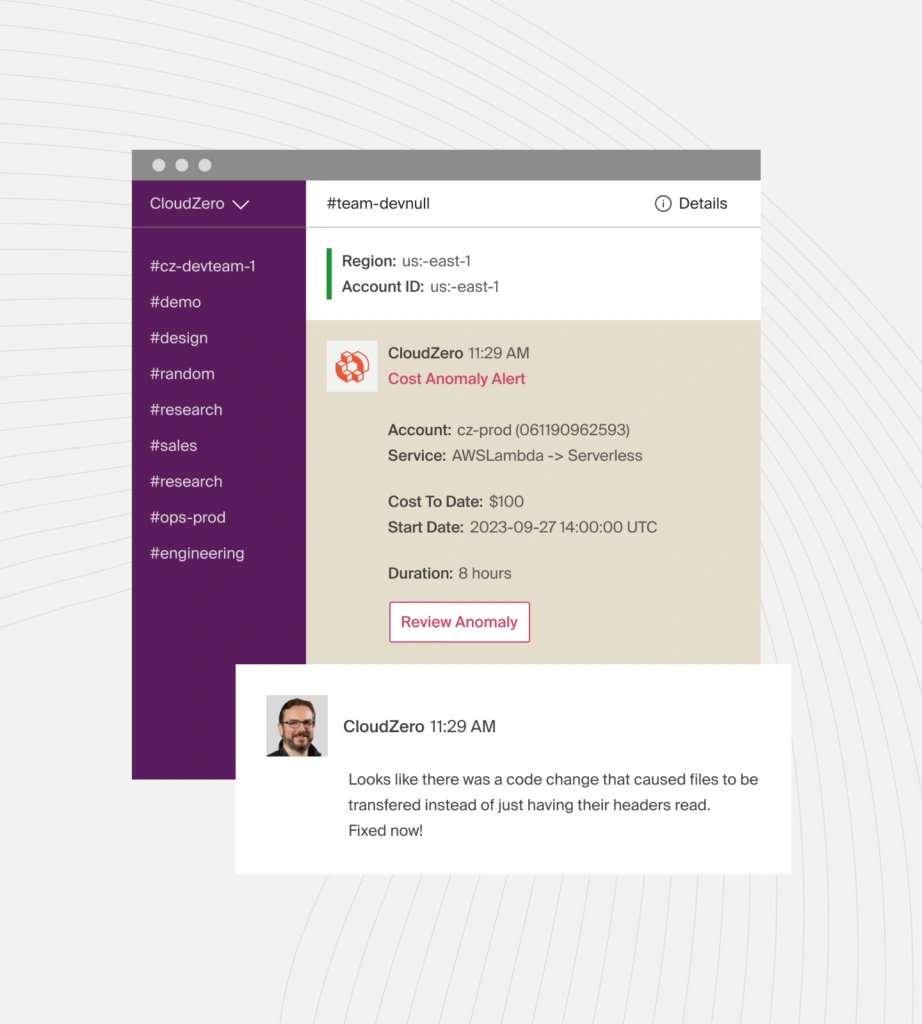

Effective anomaly alerts are specific: they identify the service, account, team, and likely cause, not just a generic cost spike notification. AWS Cost Anomaly Detection provides native alerting.

But CloudZero’s anomaly detection adds business context, showing not just that costs spiked, but which team or product the anomalous spend belongs to.
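For intuition about what a spend anomaly check does, the sketch below compares today's cost with recent history using a simple z-score. This is an illustrative baseline only, not how AWS Cost Anomaly Detection or CloudZero work internally; the threshold and the sample figures are assumptions.

```python
from statistics import mean, stdev


def is_anomalous(history, today, z_threshold=3.0):
    """Flag today's spend if it sits far above the recent daily average."""
    if len(history) < 2:
        return False  # not enough data to estimate normal variation
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return today > mu  # flat history: any increase stands out
    return (today - mu) / sigma > z_threshold


# Hypothetical daily spend in dollars for one team's workloads.
last_week = [100, 104, 98, 101, 99, 103, 102]
print(is_anomalous(last_week, 180))  # large jump
print(is_anomalous(last_week, 104))  # within normal variation
```

Running the check per team or per product, instead of on the account total, is what makes the resulting alert specific enough to act on.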

What Are The AWS Cloud Cost Optimization Tools?

AWS cost optimization tools span native AWS services, commitment management platforms, and third-party cost intelligence tools. No single platform covers everything. Most mature programs combine native tools for rate optimization with third-party tools for attribution and unit economics.

Here’s a quick overview:

| Tool | Type | Best for | Key limitation |
| --- | --- | --- | --- |
|  | Native | Spend visualization, RI/SP recommendations | Service-level only, no team/product attribution |
|  | Native | Rightsizing, idle resource identification | Recommendations require manual action |
|  | Native | EC2, Lambda, EBS rightsizing recommendations | Compute-focused only |
|  | Native | Spend spike alerts | No business context on anomalies |
|  | Native | Budget thresholds and alerts | Reactive, not predictive |
|  | Third-party | Cost allocation by team/product/customer, unit economics, anomaly context | Cloud-focused (not on-premise) |
|  | Third-party | Multi-cloud visibility, SaaS management, ITAM, enterprise governance | Broader scope adds implementation complexity |
|  | Third-party | AWS Well-Architected alignment, ML-driven rightsizing, Spot Instance automation | Deepest automation is AWS-native |

Note: Native AWS tools are the right starting point. They’re free, deeply integrated, and sufficient for basic visibility and rightsizing recommendations. Their structural limitation is that they show spend by AWS service and account, not by the business dimension that matters: which team, product, or customer is responsible for each dollar.

What Are The Best AWS FinOps Tools?

AWS FinOps tools extend beyond cost visibility to bring financial accountability into engineering workflows. The FinOps Foundation defines the practice as enabling organizations to get maximum business value from cloud spend, which requires more than dashboards. It requires shared ownership of cost data across engineering, finance, and business teams.

The most effective AWS FinOps programs use tooling that surfaces cost data to the engineers making provisioning decisions, not just to a central finance or FinOps function. When teams can see their cost per deployment, per feature, or per active user, cost optimization happens where infrastructure decisions are actually made.

See CloudZero’s FinOps tools guide for a full breakdown.

What Are The Best Practices For AWS Cloud Cost Optimization?

Here are some practical tips on how to optimize AWS costs:

Prioritize visibility before optimization. You can’t optimize what you can’t see. Establish full cost attribution by team, product, and environment before targeting waste — without attribution, optimization efforts frequently eliminate spend that was generating value alongside spend that wasn’t.

Tag consistently and enforce it at deployment. Retroactive tagging campaigns are expensive and never fully successful. Tag policies enforced before resources provision prevent the attribution gaps that make cost allocation unreliable. Every resource should carry team, environment, application, and cost center tags at minimum.

Cover stable workloads with commitments. Any workload running consistently at predictable utilization for more than a few months is a candidate for Reserved Instances or Savings Plans coverage. The savings are significant — up to 72% vs. on-demand — and commitment risk is manageable when coverage decisions are based on real utilization data.

Automate non-production shutdowns. This is the fastest, lowest-risk AWS cost reduction available for most organizations. Non-production environments running off-hours and weekends with no engineering activity are pure waste. Automation takes hours to implement and generates immediate savings.

Track cost per unit, not just total spend. Total AWS spend increasing isn’t necessarily a problem — it may mean the business is growing. The metric that reveals whether AWS spend is efficient is cost per unit of business output: cost per customer, active user, feature, team, or project. When those numbers hold steady or improve as the business scales, AWS cloud cost optimization is working.

Review commitment coverage monthly. Reserved Instances and Savings Plans purchased once and left unmanaged frequently drift out of alignment as workloads evolve. Monthly coverage reviews catch utilization gaps before they generate sustained on-demand overspend.
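The unit-cost practice above comes down to a simple ratio tracked over time. The sketch below uses hypothetical monthly figures to show total spend rising while cost per customer falls; the numbers and names are illustrative only.

```python
def cost_per_unit(total_cost, units):
    """Cost per unit of business output, such as cost per customer."""
    if units <= 0:
        raise ValueError("units must be positive")
    return total_cost / units


# Hypothetical (month, AWS spend in dollars, active customers).
months = [("Jan", 100_000, 2_000), ("Feb", 120_000, 3_000)]

unit_costs = {name: cost_per_unit(spend, customers) for name, spend, customers in months}
for name, value in unit_costs.items():
    print(f"{name}: ${value:.2f} per customer")
```

In this example spend grows 20% from January to February, yet cost per customer drops from $50 to $40, which is the pattern that indicates spend is scaling efficiently.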

Why Is Cost Attribution The Foundation Of AWS Cost Optimization?

Every AWS cloud cost optimization strategy depends on one foundation: knowing what your AWS spend actually is, at a level of granularity that makes it actionable.

Native AWS tools show spend by service. That’s a starting point, not a destination. The organizations that sustain cost optimization over time are the ones that connect AWS billing data to business context, knowing not just that EC2 costs increased, but which team’s workloads drove the increase, which product feature they support, and whether the business outcome justifies the spend.

CloudZero connects directly to your AWS Cost and Usage Report (CUR) via a read-only cross-account IAM role, ingests that line-item data, and maps it to business dimensions that the raw report can’t produce on its own.

The result: every dollar in your AWS bill becomes attributable to the business context that generated it, not just the AWS service it ran on.

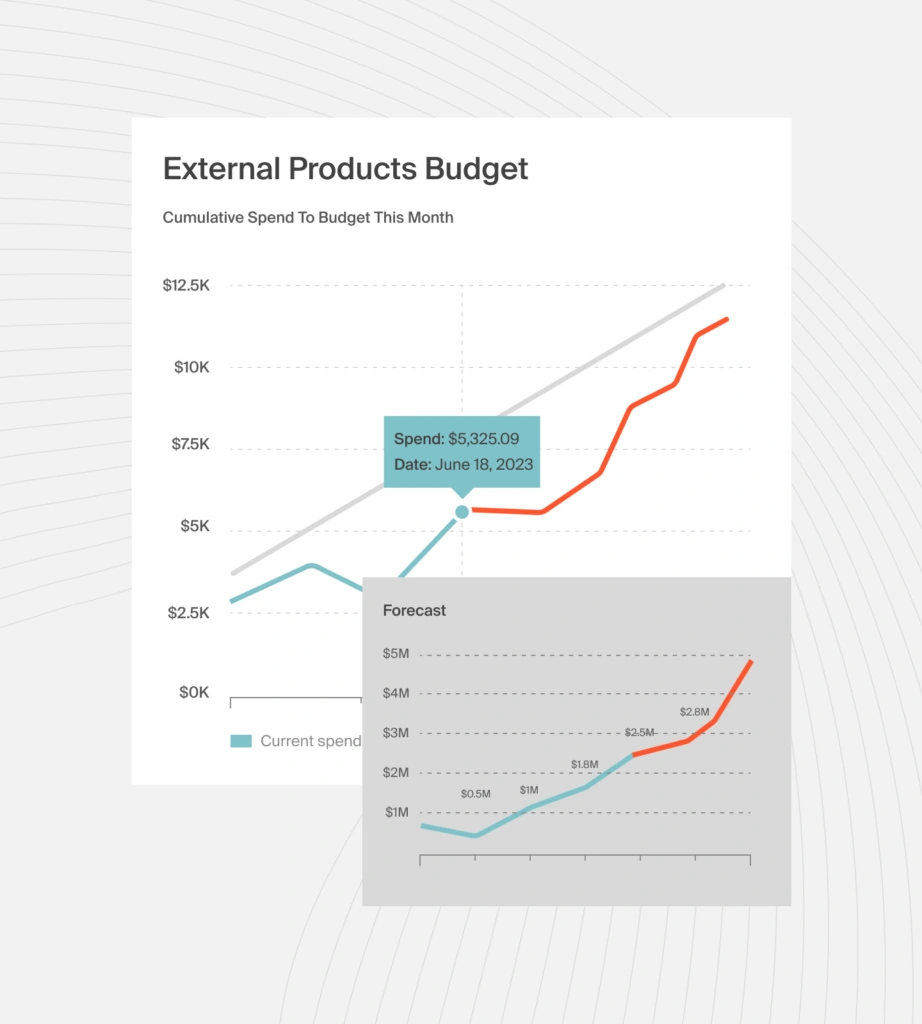

Native AWS budget tools forecast spend by service and alert you when you approach a threshold. What they can’t tell you is which team, product, or customer is driving that trajectory, or why.

CloudZero closes that gap in two ways. First, CloudZero Budgets lets organizations track AWS cost forecasting against custom groupings, not just by AWS service or account. Second, CloudZero runs forecast models directly on historical CUR data to generate demand forecasts for future cloud resource consumption and spending patterns, updated continuously. When spend starts trending above forecast, CloudZero sends automatic Slack alerts tied to the specific business unit responsible, not a generic account-level notification.

The result is AWS forecasting that finance and engineering teams can act on together, not a spend projection that lands in a finance dashboard days after the pattern has already shifted.

CloudZero’s customers see measurable AWS savings fast. Drift cut $2.4 million from their AWS bill. Upstart reduced cloud costs by $20 million. Demandbase lowered AWS spend by 36% in a single year, a result that directly supported $175 million in new financing. You can too. See CloudZero in action with your own AWS data, or start with a free cloud cost assessment to find your biggest savings opportunities today.